Eye Tracking Reveals the Unconscious Know-How Hidden in Expert Performance

You probably can’t explain how you ride a bike. Not properly, anyway. You could tell a beginner where to sit, when to push off, roughly how the gears work. But the subtle business of how hard to pedal to stay upright, when to shift your weight through a turn, the thousand tiny corrections your body makes without consulting you first? Good luck putting any of that into words.

This kind of implicit skill, the stuff we know but can’t articulate, has a name: tacit knowledge. The philosopher Michael Polanyi developed the idea in the mid-20th century, summing it up as “we know more than we can tell.” And it crops up everywhere, from a glassblower’s feel for molten material to a radiologist’s knack for spotting a tumour on an X-ray. The trouble is, because experts can’t explain what they’re doing differently, passing that expertise along usually requires years of apprenticeship and practice. There’s no shortcut. Or there wasn’t.

A team of engineers at MIT has now found a way to pull tacit knowledge out of people’s heads, more or less, by watching where their eyes go and what their brains are up to. In a study published in the Journal of Neural Engineering, Alex Armengol-Urpi and colleagues tracked volunteers’ eye movements and brain activity while they learned an image classification task. The results showed that as people got better at the task, their gaze and attention quietly shifted towards the most useful part of each image, even though the volunteers themselves had no idea they were doing it. More striking still: when the researchers showed people evidence of their own unconscious habits, their performance jumped again.

The experiment itself was fairly straightforward, if a bit sneaky. Thirty volunteers were shown a sequence of more than 120 images, each containing two simple shapes (squares, triangles, circles) on either side, decorated with various colours and patterns. The volunteers had to sort each image into group A or group B based on some hidden rule involving the combination of shape, colour and pattern. Crucially, only one side of each image actually mattered for the classification. The other side was noise.

Nobody told the participants any of this. For the first stretch of the experiment they were basically guessing, getting it wrong about as often as right. But gradually, over dozens and dozens of images, their accuracy crept up.

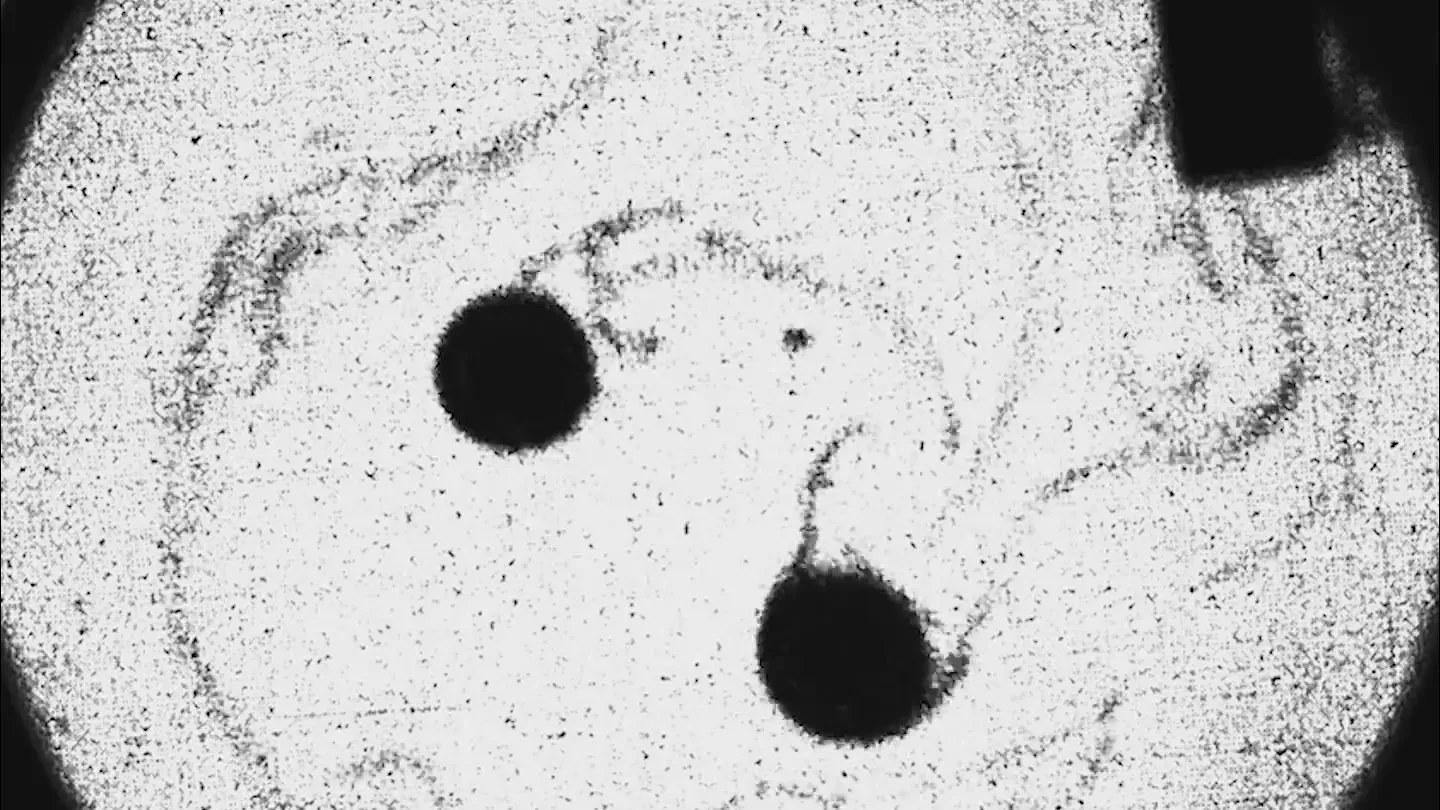

Here’s where it gets interesting. The team used eye-tracking cameras to follow each volunteer’s gaze, and fitted them with EEG sensors to record brain activity. They’d designed the shapes to flicker at different (imperceptible) frequencies, which meant they could work out where a person’s cognitive attention was directed by checking which flicker frequency their brainwaves had synced up with. Clever trick. It gave the researchers two independent windows into attention: where the eyes pointed, and where the brain was actually focused.
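For readers who want to see the flicker trick in miniature, here is a rough Python sketch of the general idea, usually called frequency tagging. It is not the team's actual analysis pipeline. The sampling rate, the two tag frequencies (12 Hz and 15 Hz) and the function names are all illustrative assumptions, and the "EEG" is a synthetic signal rather than real data.

```python
import numpy as np

def tag_power(signal, fs, freq):
    """Spectral power of `signal` at the FFT bin nearest `freq` (in Hz)."""
    windowed = signal * np.hanning(len(signal))  # taper to reduce spectral leakage
    spectrum = np.fft.rfft(windowed)
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    nearest_bin = np.argmin(np.abs(freqs - freq))
    return np.abs(spectrum[nearest_bin]) ** 2

def attended_side(signal, fs, f_left, f_right):
    """Guess the attended side by comparing power at the two tag frequencies."""
    left = tag_power(signal, fs, f_left)
    right = tag_power(signal, fs, f_right)
    return "left" if left > right else "right"

# Synthetic stand-in for an EEG trace (all values hypothetical):
# the left shape "flickers" at 12 Hz, the right at 15 Hz, and the
# simulated brain response is stronger at the left shape's frequency.
fs = 250                          # samples per second (assumed)
t = np.arange(0, 4, 1 / fs)       # four seconds of signal
rng = np.random.default_rng(0)
eeg = (1.0 * np.sin(2 * np.pi * 12 * t)
       + 0.3 * np.sin(2 * np.pi * 15 * t)
       + rng.normal(0, 0.5, t.size))

guess = attended_side(eeg, fs, f_left=12.0, f_right=15.0)
print(guess)
```

Real recordings are far noisier than this toy signal, so a practical analysis would typically average over many trials and electrodes before comparing the two frequencies.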

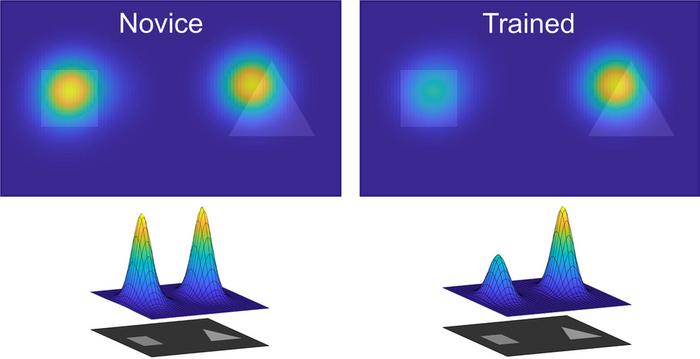

As participants improved, both measures told the same story. Early on, people looked at the whole image, scanning both sides more or less equally. By the end, their gaze and their brain activity had drifted towards the relevant side, the one that held the actual classification clue. “They were unconsciously focusing their attention on the part of the image that was actually informative,” says Armengol-Urpi, a research scientist in MIT’s Department of Mechanical Engineering. “So the tacit knowledge they had was hidden inside them.”

And the volunteers genuinely didn’t know. When surveyed afterwards, they consistently said they’d been looking at the whole image throughout. Their conscious account of what they were doing bore almost no resemblance to what the cameras and EEG caps had recorded.

The team then tried something rather neat. They showed each participant heat maps of their own gaze patterns, laying out how their attention had shifted from the novice phase to the expert phase. Armed with this new self-awareness, the volunteers tackled another set of images. Their accuracy improved further, outperforming a control group of equally trained participants who hadn’t been given the feedback. Making the tacit explicit, it seems, gave them an extra edge.
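A gaze heat map of this kind is, at heart, just a count of where the eyes landed. The Python sketch below shows one simple way to build and compare such maps. It assumes a hypothetical 1600 by 800 pixel image with the informative shape on the right, uses simulated gaze samples rather than the study's data, and the function names are made up for illustration.

```python
import numpy as np

def gaze_heatmap(xs, ys, width, height, bins=(8, 16)):
    """Bin gaze samples into a normalised 2D histogram (rows = y, columns = x)."""
    counts, _, _ = np.histogram2d(
        ys, xs, bins=bins, range=[[0, height], [0, width]]
    )
    total = counts.sum()
    return counts / total if total > 0 else counts

def share_on_right(heatmap):
    """Fraction of gaze that falls in the right half of the image."""
    middle = heatmap.shape[1] // 2
    return heatmap[:, middle:].sum()

# Simulated gaze samples (hypothetical numbers, not data from the study).
rng = np.random.default_rng(1)
width, height = 1600, 800
n = 2000

# "Novice" phase: gaze spread evenly over the whole image.
novice = gaze_heatmap(rng.uniform(0, width, n), rng.uniform(0, height, n),
                      width, height)

# "Expert" phase: gaze clustered on the right-hand shape.
expert_x = np.clip(rng.normal(1200, 150, n), 0, width - 1)
expert_y = np.clip(rng.normal(400, 100, n), 0, height - 1)
expert = gaze_heatmap(expert_x, expert_y, width, height)

novice_share = share_on_right(novice)
expert_share = share_on_right(expert)
print(f"novice: {novice_share:.2f} of gaze on the right, expert: {expert_share:.2f}")
```

Comparing the two shares is a crude summary of the shift described above: roughly even scanning at the start, heavily lopsided attention by the end.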

It’s worth noting the limits here. This was a lab-based image sorting task, not surgery or cricket or anything with real-world messiness baked in. But Armengol-Urpi reckons the principle should travel. He’s already exploring tacit knowledge in glassblowing and table tennis, as well as in medical imaging diagnosis. “We believe the underlying principle … can generalize to a wide range of perceptual and skill-based domains,” he says.

The implications are tantalising, if still somewhat speculative. In medicine, junior radiologists might learn to spot pathologies faster if they could see how experienced colleagues unconsciously direct their gaze. In sports coaching, athletes could be shown the attentional habits that separate good from great. And in skilled trades, the kind of expertise that currently takes years of watching and doing might (just might) be compressed, codified and handed over in a fraction of the time. “The tacit knowledge is what we cannot verbalize, that’s hidden in our unconscious,” says Armengol-Urpi. “If we can make that knowledge explicit, we can then allow for it to be transferred easier, which can help in education and learning in general.”

Journal reference: Journal of Neural Engineering, DOI: 10.1088/1741-2552/ae3eb8
